Information Management and Preservation

Information and Software Engineering Group (IFS), Institute of Software Technology and Interactive Systems (ISIS)

Vienna University of Technology

Astera - A Generic Model for Multimodal Information Retrieval

My PhD research is on designing a generic model for semantic multimodal information retrieval, named Astera. Finding useful information in large multimodal document collections such as the WWW is one of the major challenges of Information Retrieval (IR). The many sources of information now available (text, images, audio, video and more) increase the need for multimodal search. Equally important is the recognition that each information item is inherently multimodal (i.e. has aspects in its information character that stem from different modalities) and forms part of a networked set of related information items.

In Astera, I model multimodal domain-specific collections (such as music, patent or medical collections) with the help of different relation types, and enrich the available data by extracting inherent information in the form of facets. The model is currently being tested on the ImageCLEF 2011 multimodal collection.
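The modelling idea above can be illustrated with a minimal Python sketch. This is not the Astera code: the class names, the relation type `part_of`, and the facet `dominant_color` are hypothetical placeholders chosen only to show how information objects, typed weighted relations, and extracted facets might fit together.

```python
from dataclasses import dataclass, field


@dataclass
class InfoObject:
    """One information item; facets hold information extracted from it."""
    object_id: str
    modality: str  # e.g. "text" or "image" (illustrative values)
    facets: dict = field(default_factory=dict)


@dataclass
class Relation:
    """A typed, weighted, directed link between two information objects."""
    source: str
    target: str
    relation_type: str  # hypothetical label, not taken from Astera
    weight: float = 1.0


class Collection:
    """A small in-memory graph of information objects and relations."""

    def __init__(self):
        self.objects = {}
        self.relations = []

    def add_object(self, obj):
        self.objects[obj.object_id] = obj

    def relate(self, source, target, relation_type, weight=1.0):
        # Both endpoints must already be part of the collection.
        for object_id in (source, target):
            if object_id not in self.objects:
                raise KeyError(f"unknown object: {object_id}")
        self.relations.append(Relation(source, target, relation_type, weight))

    def neighbors(self, object_id, relation_type=None):
        """Targets reachable from object_id, optionally filtered by type."""
        return [
            r.target
            for r in self.relations
            if r.source == object_id
            and (relation_type is None or r.relation_type == relation_type)
        ]


collection = Collection()
collection.add_object(InfoObject("doc1", "text"))
collection.add_object(InfoObject("img1", "image", {"dominant_color": "blue"}))
collection.relate("doc1", "img1", "part_of", weight=0.8)
```

Keeping facets on the object and relation types on the edges lets a retrieval step filter or weight links by type without touching the objects themselves.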

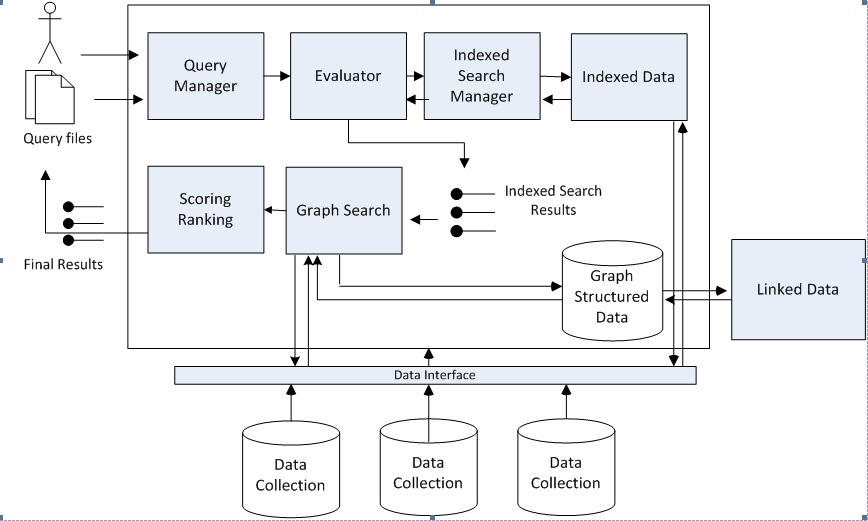

As shown in Figure 1, we use a hybrid retrieval method that consists of two steps: 1) In the first step, we perform an initial search with Lucene and keep the resulting initial result list. 2) In the second step, using this first set of data objects as seeds, we follow the weighted edges of the graph from these starting points for a number of steps. We perform spreading activation and, at the end, recompute the ranked result list based on the activation values that nodes receive via propagation.
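The second step can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the Astera implementation: the initial Lucene result list is replaced by a hand-written dictionary of seed scores, and the function name `spread_activation`, the `decay` factor, the additive update rule, and the toy graph are all hypothetical.

```python
from collections import defaultdict


def spread_activation(graph, seeds, steps=2, decay=0.5):
    """Propagate seed scores along weighted edges and rank all reached nodes.

    graph: dict mapping a node to a list of (neighbor, edge_weight) pairs.
    seeds: dict mapping a node to its initial score (stand-in for the
           scores of the initial text search).
    """
    activation = defaultdict(float, seeds)
    frontier = dict(seeds)
    for _ in range(steps):
        next_frontier = defaultdict(float)
        for node, energy in frontier.items():
            for neighbor, weight in graph.get(node, []):
                # Each hop passes on a decayed, edge-weighted share of energy.
                next_frontier[neighbor] += energy * weight * decay
        for node, energy in next_frontier.items():
            activation[node] += energy
        frontier = next_frontier
    # Re-rank by the total activation each node has accumulated.
    return sorted(activation.items(), key=lambda item: item[1], reverse=True)


# Toy graph: two text documents linked to each other and to images.
graph = {
    "d1": [("img1", 0.8), ("d2", 0.5)],
    "d2": [("img2", 1.0)],
}
seeds = {"d1": 1.0, "d2": 0.4}  # assumed scores from the initial search
ranked = spread_activation(graph, seeds, steps=2, decay=0.5)
```

In this sketch the images `img1` and `img2` enter the ranking only through propagation, since they were not part of the seed list. The number of steps and the decay factor control how far from the seeds the search is allowed to reach.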

Collaboration

Astera is an open-source project under the GPL licence, version 2.0. You can find the project source here. Astera has contributed to the Mucke project. The two are collaborating projects and benefit from each other's components. Astera is designed and developed in a modular way. If you are interested in contributing new ideas or code, just contact me.

Publications

- Sabetghadam S., Lupu M., and Rauber A., "Astera - A generic model for Multimodal Information Retrieval". In Proc. of Integrating IR technologies for Professional Search Workshop, 2013.

- Sabetghadam S., Lupu M., Bierig R., and Rauber A., "A combined approach of structured and non-structured IR in multimodal domain". In Proc. of International Conference on Multimedia Retrieval, ICMR'14, 2014.

- Sabetghadam S., Lupu M., Bierig R., and Rauber A., "A Hybrid Approach for Multi-Faceted IR in Multimodal Domain". CLEF, 2014.

- Sabetghadam S., Lupu M., and Rauber A., "Which one do you choose? Spreading Activation or Random Walks?". Submitted to IRFC, 2014.